What percentage of Americans believe in human-caused climate change?

The answer depends on what is asked – and how. In a new study, researchers at the Annenberg Public Policy Center (APPC) of the University of Pennsylvania found that “seemingly trivial decisions made when constructing questions can, in some cases, significantly alter the proportion of the American public who appear to believe in human-caused climate change.”

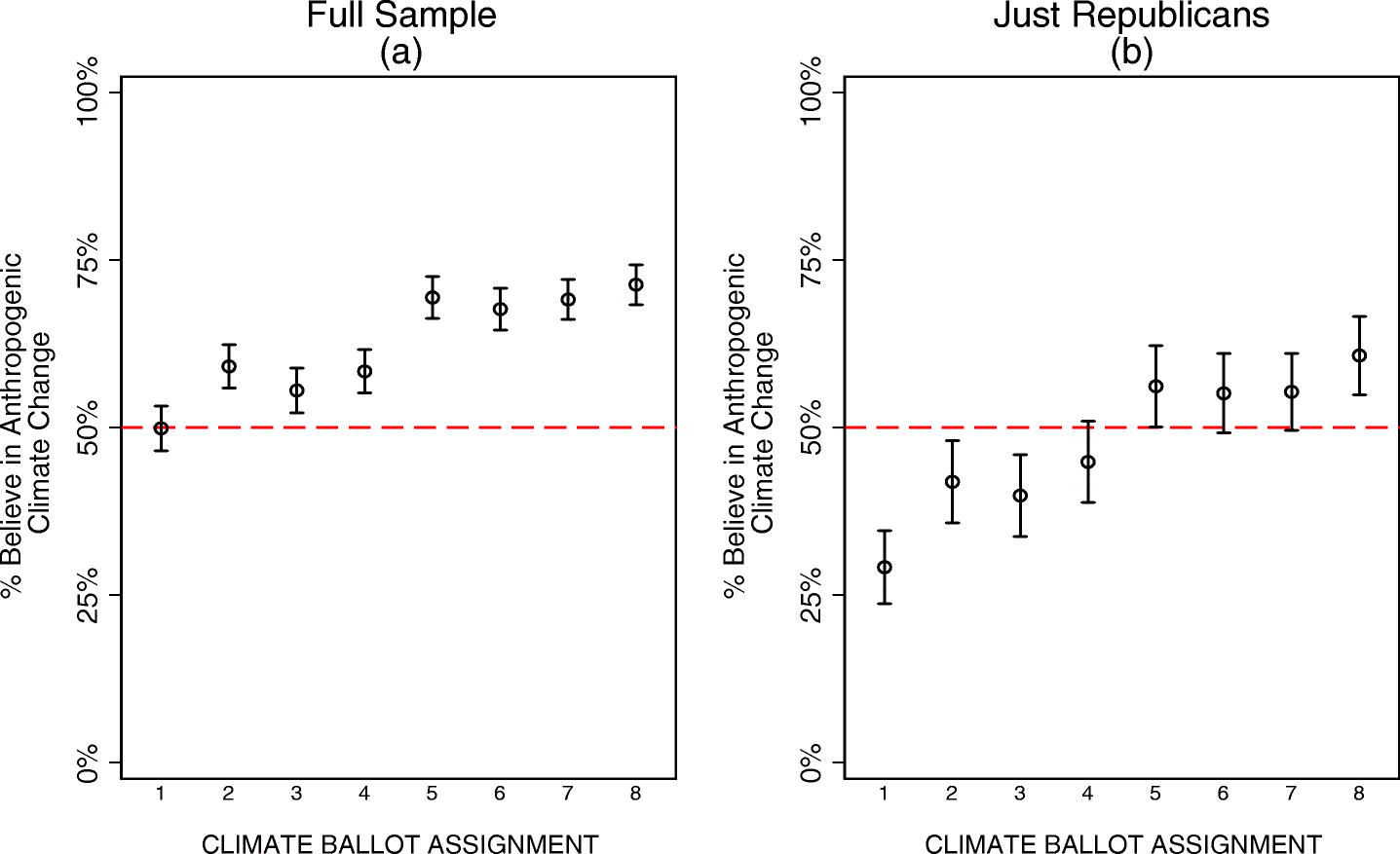

Surveying more than 7,000 people, the researchers found that the proportion of Americans who believe that climate change is human-caused ranged from 50 percent to 71 percent, depending on the question format. The share of self-identified Republicans who say they accept climate change as human-caused varied even more dramatically, from 29 percent to 61 percent.

“People’s beliefs about climate change play an important role in how they think about solutions to it,” said the lead author, Matthew Motta, one of four APPC postdoctoral fellows who conducted the study. “If we can’t accurately measure those beliefs, we may be under- or overestimating their support for different solutions. If we want to understand why the public supports or opposes different policy solutions to climate change, we need to understand what their views are on the science.”

The study, published in Climatic Change, is based on an online survey of 7,019 U.S. adults conducted September 11-18, 2018, in which respondents were presented with questions in one of eight formats.

Three ways of asking

The study tested three variations in question format in different combinations, in which respondents were:

- Given the option to respond with a choice of “don’t know” or allowed to just skip the question (a “hard” don’t know vs. a “soft” don’t know);

- Provided with explanatory text saying that climate change is caused by greenhouse gas emissions, or given no additional text apart from the question;

- Presented with discrete, multiple-choice responses and asked to pick the one that comes closest to their views – or shown a statement and asked how strongly they agreed or disagreed with it, using a seven-point agree-disagree scale.
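The three two-way choices above combine into the eight question formats used in the study. As a rough illustration only, the short Python sketch below enumerates those combinations. The variable and function names are hypothetical, and the ordering of the factors is an assumption chosen so the output matches the condition numbering given in the study's figure caption; it is not the researchers' own code.

```python
from itertools import product

# Factor levels taken from the release. The order in which the factors vary
# is an assumption, picked to reproduce the figure caption's numbering.
RESPONSE_FORMATS = ["Discrete", "Likert"]
EXPLAINERS = ["No Explainer", "Explainer"]
DONT_KNOW = ["Hard DK", "Soft DK"]


def build_conditions():
    """Return the eight format combinations as (number, label) pairs."""
    combos = product(RESPONSE_FORMATS, EXPLAINERS, DONT_KNOW)
    return [
        (number, f"{fmt}, {dk}, {explainer}")
        for number, (fmt, explainer, dk) in enumerate(combos, start=1)
    ]


CONDITIONS = build_conditions()

if __name__ == "__main__":
    for number, label in CONDITIONS:
        print(f"({number}) {label}")
```

Each respondent saw the question in just one of these eight formats, which is what lets the effect of each choice be compared across groups.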

The two extremes

Two formats produced the most extreme results:

- The “Pew Style” approach, which uses a clear “don’t know” option, no explanatory text, and a discrete choice among statements as to which best represents your views, produced the lowest acceptance of human-caused climate change: 50 percent of U.S. adults and just 29 percent of Republicans.

- The van Boven et al. approach, cited by Leaf van Boven and David Sherman in a 2018 New York Times op-ed, “Actually, Republicans Do Believe in Climate Change,” uses an agree-disagree scale and explanatory text and does not offer a “don’t know” option. In the present study, this format combination found that 71 percent of U.S. adults and 61 percent of Republicans believe in human-caused climate change, the highest level of acceptance among the eight question formats studied.

The researchers said that the differences show how question construction can produce widely varying reports about what the public purportedly thinks. But they cautioned that because the respondents in this study were not a representative sample of the U.S. adult population, the raw estimates can’t be read as definitively reflecting the acceptance of anthropogenic climate change (ACC).

How format choices matter

While other differences in wording and question structure have been studied, the researchers said these three choices in format have not been examined closely. Questions that lack a hard “don’t know” response may nudge participants to pick a response that doesn’t reflect their lack of an opinion – and thereby inflate acceptance of human-caused climate change. Likewise, they said questions that use explanatory text may push respondents toward a greater acceptance.

However, they found that the most substantively and statistically significant increases in belief in climate change came from the use of an agree-disagree scale (so-called Likert-style response options) instead of distinct choices in response. In other fields, the researchers wrote, the agree-disagree format has been shown to introduce acquiescence bias, which occurs when respondents “agree” with a statement in order to “avoid thinking deeply about the matter at hand” or “avoid appearing disagreeable to the interviewer…”

Question wording & response option effects across experimental conditions. Hollow circles correspond to mean levels of agreement with anthropogenic climate change, observed across experimental conditions. 95% confidence intervals extend out from each one. These analyses use weighted survey data and do not include Independents who “lean” toward one party over the other. For additional information see the Supplementary Materials. Conditions: (1) Discrete, Hard DK [Don’t Know], No Explainer; (2) Discrete, Soft DK, No Explainer; (3) Discrete, Hard DK, Explainer; (4) Discrete, Soft DK, Explainer; (5) Likert, Hard DK, No Explainer; (6) Likert, Soft DK, No Explainer; (7) Likert, Hard DK, Explainer; (8) Likert, Soft DK, Explainer.

The researchers said that they found no differences in the way that these question format changes affected Republicans and Democrats. “We hope that our research can help to broadly raise awareness of measurement issues in the study of climate change opinion and alert scholars to which specific design elements are most likely to impact opinion estimates,” the researchers said.

They added that additional research is needed to understand the psychological mechanisms underlying the effects seen here.

In addition to Motta, the study was written by Annenberg Public Policy Center postdoctoral fellows Daniel Chapman, Dominik Stecuła, and Kathryn Haglin. “An experimental examination of measurement disparities in public climate change beliefs” is published in Climatic Change.
